What Is an Intelligent Agent in AI?

- 03 April, 2026

- Max 10 min read

Ami Tran

McKinsey’s latest State of AI report notes that 88% of organizations now use AI in at least one business function!

That number shows that applications of artificial intelligence and intelligent agents are no longer side projects. They have quietly become part of day-to-day operations.

You can see it in customer support, fraud monitoring systems, supply chain dashboards, hiring platforms, and product recommendation engines. AI shows up in practical, often invisible ways. But underneath many of these systems is a specific component that actually drives decisions: the Intelligent Agent.

In this article, we will break down the AI agent definition, compare AI agents, chatbots, and AI assistants, explain how the perceive–reason–act loop works, and review the common types of AI agents. We’ll also look at real-world applications across industries and weigh the benefits and limitations.

What Is an Intelligent Agent?

An intelligent agent is a system or computer program that can observe what is happening around it and decide what to do next in order to reach a goal. Instead of waiting for user input at every step, the system can interpret inputs, make a decision, and carry out an action. In many AI systems, the agent in artificial intelligence is the component that turns analysis into actual behavior.

Here is what an intelligent agent can do:

- Observe its environment by collecting data or signals from available sources.

- Interpret incoming information so it can understand what is happening.

- Evaluate possible actions and decide which option best supports the goal.

- Take action that changes the environment or produces a useful outcome.

- Act with some level of autonomy, without requiring constant human input.

- Improve over time by adjusting its behavior based on past results or feedback.

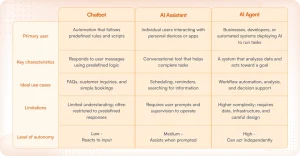

AI Agent vs. Chatbot vs. AI Assistant

These three terms get used interchangeably, but they describe meaningfully different technologies. A chatbot does one thing well: it responds to messages, which means you can ask it a question and get an answer right away.

AI assistants are more advanced than chatbots. They can actually do things on your behalf, such as booking a meeting, pulling up information, and interacting with other apps. They’re still reactive, though, because they wait for you to ask before they act.

AI agents are where the real shift happens, and it’s all about autonomy. They don’t just respond or execute on request; they’re built to pursue a goal. That means analyzing a situation, deciding what action makes sense, and following through, often without someone prompting each step along the way.

Below is the comparison chart for chatbot, AI assistant, and AI agent:

How It Works — Perceive–Reason–Act Loop

An intelligent agent works in a loop: it keeps taking in signals, interpreting them, deciding what to do next, and acting on that decision. This cycle is commonly described as Perceive → Reason → Act, with feedback feeding the next round. That loop is what allows an agent in artificial intelligence to handle situations that change from moment to moment instead of simply executing prewritten instructions.

To make this easier to picture, consider a customer support AI agent handling a billing ticket. A customer writes: “I was charged twice this month.” The agent reads the message, checks the customer’s account, decides what likely happened, and responds.

Below are the 4 stages of the loop:

1. Perceive: Taking in the Situation

The first step is perception. The agent collects information from its environment, such as user messages, account data, past conversation history, and system records.

In the billing example above, the agent first receives the customer’s message: “I was charged twice this month.” At this stage, the system is not examining the problem yet. It simply collects the available context: the customer’s account ID, recent transactions, and the conversation history tied to that support request.

2. Reason: Figuring Out What It Means

Moving to the next step, the system interprets the information it just collected. Models or decision logic help the agent determine what the issue likely is and what actions are possible.

In the billing example, the agent compares the customer’s message with the transaction history. It detects two charges posted close together within the same billing cycle. Based on patterns it has learned, the system infers that this is likely a duplicate charge or billing error.

3. Act: Carrying Out the Decision

Once the agent determines the best option to resolve the issue, it executes it. The action might be a response to the user, a system operation, or a workflow trigger.

In this scenario, the system retrieves the relevant billing details and sends a response to the customer explaining the charge error. If the system confirms that a duplicate payment occurred, it can also trigger a refund workflow or create a support task so a human agent can follow up on the case.

4. Learn: Improving from the Outcome

When the issue is resolved, the system records what happened and how the issue was handled.

Finally, if the refund successfully resolves the ticket and the customer confirms the problem is fixed, that outcome becomes a signal the agent can learn from. Over time, similar billing tickets can be recognized faster, and the agent can choose the correct resolution with greater confidence.
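To make the four stages concrete, here is a minimal Python sketch of the billing example. Everything in it is an assumption made for illustration: the `BillingAgent` class, the `Charge` record, the "same amount within two days" rule, and the action names are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass, field
from itertools import combinations


@dataclass
class Charge:
    amount: float
    day: int  # day within the billing cycle (hypothetical field)


@dataclass
class BillingAgent:
    # Hypothetical memory: diagnosis -> number of confirmed resolutions
    resolved: dict = field(default_factory=dict)

    def perceive(self, message: str, charges: list) -> dict:
        # Collect context only; no interpretation happens here.
        return {"message": message, "charges": charges}

    def reason(self, context: dict) -> str:
        # Assumed rule: two equal charges at most 2 days apart look like a duplicate.
        for a, b in combinations(context["charges"], 2):
            if a.amount == b.amount and abs(a.day - b.day) <= 2:
                return "duplicate_charge"
        return "needs_human_review"

    def act(self, diagnosis: str) -> str:
        # Map the diagnosis to an action (names are placeholders).
        if diagnosis == "duplicate_charge":
            return "refund_workflow_started"
        return "support_task_created"

    def learn(self, diagnosis: str, customer_confirmed: bool) -> None:
        # Record confirmed outcomes so later decisions can draw on them.
        if customer_confirmed:
            self.resolved[diagnosis] = self.resolved.get(diagnosis, 0) + 1


agent = BillingAgent()
context = agent.perceive("I was charged twice this month.", [Charge(49.0, 3), Charge(49.0, 4)])
diagnosis = agent.reason(context)
action = agent.act(diagnosis)
agent.learn(diagnosis, customer_confirmed=True)
```

A production agent would likely replace the hard-coded rule in `reason` with a model and would store outcomes somewhere more durable than an in-memory dictionary, but the shape of the loop stays the same.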

5 Types of Intelligent Agents

AI agents come in several types. They differ in how they produce actions and in how much reasoning they do before acting in their environment. Some agents are very simple and only react to what they detect at the moment. Others work toward goals by comparing outcomes, or even improve their behavior over time.

Below are the 5 common types of agents and examples we often see in our daily lives:

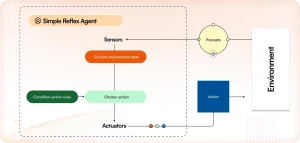

1. Simple Reflex Agents

Simple reflex agents use the simplest form of decision-making available to an agent in artificial intelligence. When the agent detects a specific condition in the environment, it performs a predefined action.

The agent does not think about past or future actions. It only checks what is happening right now and follows a rule that tells it what to do. In other words, the decision is immediate and reactive.

Example: A spam filter in an email system. When the system detects certain suspicious words or patterns, it automatically marks the message as spam without analyzing the broader context.

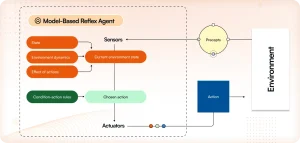
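The spam filter example can be sketched as a single condition-action rule. The phrase list below is made up for illustration, and real spam filters are usually far more sophisticated; the point is only that the decision depends on the current input and nothing else.

```python
# Hypothetical rule set; a real filter would not rely on three phrases.
SUSPICIOUS_PHRASES = ("free money", "act now", "wire transfer")


def simple_reflex_spam_filter(message: str) -> str:
    """Condition-action rule: no memory, no lookahead, only the current message."""
    text = message.lower()
    if any(phrase in text for phrase in SUSPICIOUS_PHRASES):
        return "spam"
    return "inbox"


label = simple_reflex_spam_filter("Act now to claim your FREE MONEY")
```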

2. Model-Based Reflex Agents

Model‑based reflex agents go one step further than simple reflex agents. Instead of relying only on what they can observe at a single moment, these agents maintain a small internal model of how the world works.

This internal model helps the agent keep track of how the environment changes over time. Even when some information is missing, the system can estimate what is happening based on what it has already observed.

Example: A robot vacuum cleaner that builds a map of a room while cleaning. By remembering walls, obstacles, and areas it already covered, the system can navigate more efficiently.

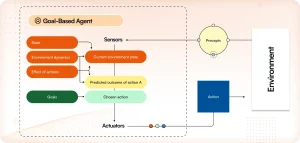
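A rough sketch of the robot vacuum idea, assuming a grid of `(x, y)` cells. The `VacuumAgent` class and its methods are hypothetical; the internal model here is just two sets that remember cleaned cells and known obstacles.

```python
class VacuumAgent:
    def __init__(self):
        self.cleaned = set()    # internal model: cells already covered
        self.obstacles = set()  # internal model: cells known to be blocked

    def update_model(self, position, bumped_into=None):
        # Remember where we have been and what we ran into.
        self.cleaned.add(position)
        if bumped_into is not None:
            self.obstacles.add(bumped_into)

    def choose_move(self, position):
        x, y = position
        neighbors = [(x + 1, y), (x, y + 1), (x - 1, y), (x, y - 1)]
        # Prefer a neighbor that is neither blocked nor already cleaned.
        for cell in neighbors:
            if cell not in self.obstacles and cell not in self.cleaned:
                return cell
        # Otherwise fall back to any neighbor that is not blocked.
        for cell in neighbors:
            if cell not in self.obstacles:
                return cell
        return position  # boxed in: stay put


vacuum = VacuumAgent()
vacuum.update_model((0, 0), bumped_into=(1, 0))
first_move = vacuum.choose_move((0, 0))
```

Because the agent remembers the obstacle at `(1, 0)`, it skips that cell without bumping into it again, which a simple reflex agent could not do.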

3. Goal-Based Agents

Goal-based agents employ a more considered decision-making process. Rather than responding instantaneously, the agent evaluates various potential actions, determining which will most effectively advance it toward a specified goal.

Consequently, the system must anticipate the consequences of its actions prior to selecting a course of action. Through the comparison of potential outcomes, the agent identifies the option that most effectively facilitates the attainment of its objective.

Example: A chess AI that evaluates many possible moves before choosing the one that improves its chances of winning the game.

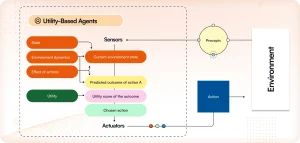
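A chess engine is too large to show here, so the sketch below swaps in a much simpler stand-in for the same idea: a breadth-first search that looks ahead through possible moves on a small grid and returns a path that reaches the goal. The function name, grid layout, and wall positions are all assumptions for illustration.

```python
from collections import deque


def plan_to_goal(start, goal, walls, width, height):
    """Breadth-first search: return a shortest path of cells from start to goal, or None."""
    frontier = deque([start])
    came_from = {start: None}
    while frontier:
        current = frontier.popleft()
        if current == goal:
            # Walk back through predecessors to rebuild the path.
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        x, y = current
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if in_bounds and nxt not in walls and nxt not in came_from:
                came_from[nxt] = current
                frontier.append(nxt)
    return None  # the goal cannot be reached


path = plan_to_goal(start=(0, 0), goal=(2, 0), walls={(1, 0)}, width=3, height=2)
```

The agent does not react to the wall at `(1, 0)` step by step. It evaluates the consequences of its moves before acting and commits to the route that actually reaches the goal.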

4. Utility-Based Agents

Utility‑based agents are capable of comparing how good different outcomes are. Sometimes multiple actions can reach the same goal, but some results may be more desirable than others.

To handle this, the agent examines each possible outcome and assigns a value to it. The system then chooses the action that produces the highest overall benefit.

Example: A logistics routing system that evaluates different delivery routes and selects the one that balances travel time, distance, and cost.

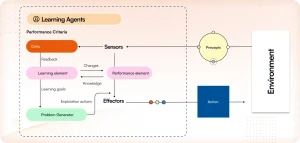
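One common way to express this is a weighted score. The sketch below assumes three candidate routes and a set of weights, all invented for illustration; in practice the weights would come from business priorities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    minutes: float
    km: float
    cost: float


# Hypothetical weights: how much each factor counts against a route.
WEIGHTS = {"minutes": 1.0, "km": 0.5, "cost": 2.0}


def utility(route: Route) -> float:
    # Higher is better, so the weighted penalties are negated.
    penalty = (
        WEIGHTS["minutes"] * route.minutes
        + WEIGHTS["km"] * route.km
        + WEIGHTS["cost"] * route.cost
    )
    return -penalty


def choose_route(routes):
    # Pick the route with the highest utility.
    return max(routes, key=utility)


routes = [
    Route("highway", minutes=30, km=40, cost=8),
    Route("city", minutes=45, km=25, cost=3),
    Route("toll_road", minutes=25, km=38, cost=15),
]
best = choose_route(routes)
```

All three routes reach the destination, so a goal-based agent would treat them as equally acceptable. The utility function is what lets the agent prefer one of them, and changing the weights changes the choice.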

5. Learning Agents

Learning agents introduce the ability to improve through experience. Instead of relying only on predefined rules, these agents adjust how they behave as they interact with their environment.

Over time, the system examines the results of its actions and learns what works better. This allows the agent to gradually make better decisions and adapt when conditions change.

Example: AI assistants powered by large language models that improve their responses over time through training data and user feedback.
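Training a large language model is well beyond a short example, so the sketch below illustrates the underlying idea with something much smaller: an agent that keeps a running average of the feedback each action has received and prefers whichever action has scored best so far. The class, action names, and reward values are hypothetical.

```python
class LearningAgent:
    def __init__(self, actions):
        self.value = {a: 0.0 for a in actions}  # estimated reward per action
        self.count = {a: 0 for a in actions}    # how often each action got feedback

    def choose(self):
        # Greedy choice: highest estimated value (ties go to the first action listed).
        return max(self.value, key=self.value.get)

    def learn(self, action, reward):
        # Incremental mean: nudge the estimate toward the observed reward.
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]


learner = LearningAgent(["template_a", "template_b"])
before = learner.choose()
learner.learn("template_a", 0.0)  # customers rated this reply poorly
learner.learn("template_b", 1.0)  # customers rated this reply well
after = learner.choose()
```

This version never explores, so it can get stuck on an early favorite. Practical learning agents usually mix in some exploration, but the feedback-driven update is the part that makes them "learning" agents.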

Real-World Applications

Most executives encounter intelligent agents long before they realize it. They’re not always labeled as AI; sometimes they’re just “the system that handles ticket routing” or “the tool that catches fraud.” The names and descriptions may vary, but the underlying capability is the same.

Here are a few industries where agents in artificial intelligence are already doing meaningful work.

1. Customer Service

AI agents can handle repetitive tasks for the support team, such as billing questions, account resets, and order status checks. Agents read the request, determine what’s needed, and either resolve it or send it to the right place. The team ends up spending their time on the cases that actually need judgment.

2. Finance

Fraud detection used to mean reviewing transactions after the fact. By then, the money’s usually gone. Agents watch in real time, and when something looks off, like the same card being used on two continents within the same hour, they act immediately. Flag it, pause it, notify someone. The window between “suspicious” and “confirmed fraud” closes fast.
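The "two continents within the same hour" check can be sketched in a few lines. The transaction fields (`id`, `card`, `continent`, `time`) and the one-hour window are assumptions for illustration; real fraud systems weigh many more signals than this single rule.

```python
from datetime import datetime, timedelta


def flag_impossible_travel(transactions, window=timedelta(hours=1)):
    """Return (earlier_id, later_id) pairs: same card, different continents, within the window."""
    flagged = []
    seen_by_card = {}
    for tx in sorted(transactions, key=lambda t: t["time"]):
        # Compare against earlier transactions on the same card.
        for prev in seen_by_card.get(tx["card"], []):
            close_in_time = tx["time"] - prev["time"] <= window
            if prev["continent"] != tx["continent"] and close_in_time:
                flagged.append((prev["id"], tx["id"]))
        seen_by_card.setdefault(tx["card"], []).append(tx)
    return flagged


transactions = [
    {"id": "t1", "card": "c1", "continent": "Europe", "time": datetime(2026, 4, 3, 9, 0)},
    {"id": "t2", "card": "c1", "continent": "Asia", "time": datetime(2026, 4, 3, 9, 40)},
    {"id": "t3", "card": "c2", "continent": "Europe", "time": datetime(2026, 4, 3, 9, 45)},
]
alerts = flag_impossible_travel(transactions)
```

In an agent, each flagged pair would feed the "act" step: pausing the card, notifying the customer, or opening a case for review.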

3. Healthcare

The problem in most clinical settings isn’t a lack of information; it’s too much of it coming in at once. Agents help by sorting incoming cases based on reported symptoms and patient history, making sure the urgent ones surface quickly rather than sitting in a queue. This doesn’t replace a clinician’s judgment. It just means that judgment gets applied where it’s actually needed, and much faster.
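The "urgent cases surface first" behavior maps naturally onto a priority queue. The sketch below assumes an upstream step has already assigned each case a numeric urgency score; the `TriageQueue` class, the case IDs, and the scores are hypothetical and carry no clinical meaning.

```python
import heapq
import itertools


class TriageQueue:
    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker: earlier arrivals go first

    def add_case(self, case_id, urgency):
        # heapq is a min-heap, so urgency is negated to pop the most urgent case first.
        heapq.heappush(self._heap, (-urgency, next(self._arrival), case_id))

    def next_case(self):
        # Return the most urgent waiting case, or None if the queue is empty.
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


queue = TriageQueue()
queue.add_case("case-1", urgency=2)
queue.add_case("case-2", urgency=9)
queue.add_case("case-3", urgency=9)
first = queue.next_case()
```

The queue only decides the order in which cases reach a clinician. The clinical decision itself stays with the person reviewing the case.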

4. Logistics

A route that made sense at 7am often doesn’t by 10am because traffic changes, a delivery runs long, or priorities shift. Agents handle that recalculation continuously so drivers aren’t working off a plan that’s already stale. Fewer errors, less fuel wasted, less time spent on the phone sorting out what went wrong.

5. Cybersecurity

Security teams are stretched, and manual monitoring at scale isn’t realistic. Agents run in the background watching for anything that deviates from normal, such as odd login times, data moving when it shouldn’t, or access from unexpected locations. They don’t replace the team; they make sure the team is looking at the right things when it counts.
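"Deviates from normal" can be made precise in many ways. One simple option, shown here as a sketch, is a z-score check: flag a value that sits more than a few standard deviations from the historical average. The threshold of 3 and the sample login hours are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(value, history, threshold=3.0):
    """Flag a value more than `threshold` standard deviations from the historical mean."""
    mu = mean(history)
    sigma = stdev(history)  # needs at least two historical values
    if sigma == 0:
        # No variation in the history: anything different is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


usual_login_hours = [9, 9, 10, 8, 9, 10, 9, 8]  # hypothetical history for one user
night_login = is_anomalous(3, usual_login_hours)
normal_login = is_anomalous(10, usual_login_hours)
```

This treats the hour as a plain number, so it does not account for times wrapping around midnight. A real monitoring agent would also combine several signals, such as location and data volume, before raising an alert.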

Benefits and Limitations of Intelligent Agents

Intelligent agents solve real problems, but they’re not the right fit for everything. Knowing where they perform well and where they don’t is what separates a good deployment from an expensive one.

1. Benefits of Intelligent Agents

The most immediate advantage is speed and volume. Agents can process large amounts of data and act on it faster than any human team, which matters in environments where delays have real consequences, such as fraud detection, network monitoring, and logistics rerouting.

They also get better over time. Many agents adjust based on new data and feedback, which means the system handling decisions six months from now will generally be more accurate than the one you deployed on day one.

And once they’re running, they largely run themselves. That’s the practical appeal for most organizations: a system that monitors, analyzes, and acts without someone needing to supervise every step.

2. Limitations of Intelligent Agents

Agents are built on patterns. In stable, predictable environments, that works well. In situations that fall outside what the system was trained on, performance drops. They don’t improvise the way a person does when circumstances shift unexpectedly.

There’s also a resource consideration. Advanced agents aren’t cheap to build or operate. Computing costs can add up, particularly for organizations running complex models at scale.

And perhaps most importantly, if the data or rules behind an agent carry bias, the agent will carry it too. Without proper monitoring, those errors compound quietly over time. It’s one area where human oversight isn’t optional; it’s necessary.

Conclusion

At a high level, intelligent agents run on a straightforward loop: perceive, reason, act, and learn. They observe what’s happening, make sense of it, take action, and use the outcome to inform what they do next. That cycle is what allows them to handle dynamic, shifting environments rather than just executing a fixed set of instructions.

As large language models continue to mature, agents are becoming the practical layer between people and the digital systems they rely on. Instead of manually navigating software or managing workflows step by step, teams will increasingly hand that work to agents that can interpret requests, interact with tools, and follow through — with minimal back-and-forth.

For organizations actively looking at AI integration, agent-based systems are quickly moving from emerging concept to standard architectural consideration.

If you’re thinking about where intelligent agents fit in your own operations, Blazeup is built with that transition in mind. If you’d like to explore what agent-based automation could look like for your team, we’re happy to walk you through it. Get in touch to set up a consultation.
